# load packages

library(tidyverse)

library(tidymodels)

library(knitr)

library(patchwork)

library(GGally) # for pairwise plot matrix

library(corrplot) # for correlation matrix

# set default theme in ggplot2

ggplot2::theme_set(ggplot2::theme_bw())

Multicollinearity

Announcements

Exam corrections (optional) due Tuesday, March 4 at 11:59pm on Canvas

Project proposal due TODAY at 11:59pm

Team Feedback (email from Teammates) due Tuesday, March 4 at 11:59pm

DataFest: April 4 - 6 - https://dukestatsci.github.io/datafest/

Computing set up

Topics

Multicollinearity

Definition

How it impacts the model

How to detect it

What to do about it

Data: Trail users

- The Pioneer Valley Planning Commission (PVPC) collected data at the beginning of a trail in Florence, MA for ninety days between April 5, 2005 and November 15, 2005

- Data collectors set up a laser sensor, with breaks in the laser beam recording when a rail-trail user passed the data collection station.

# A tibble: 5 × 7

volume hightemp avgtemp season cloudcover precip day_type

<dbl> <dbl> <dbl> <chr> <dbl> <dbl> <chr>

1 501 83 66.5 Summer 7.60 0 Weekday

2 419 73 61 Summer 6.30 0.290 Weekday

3 397 74 63 Spring 7.5 0.320 Weekday

4 385 95 78 Summer 2.60 0 Weekend

5 200 44 48 Spring 10 0.140 Weekday

Source: Pioneer Valley Planning Commission via the mosaicData package.

Variables

Outcome:

- volume: estimated number of trail users that day (number of breaks recorded)

Predictors

- hightemp: daily high temperature (in degrees Fahrenheit)

- avgtemp: average of daily low and daily high temperature (in degrees Fahrenheit)

- season: one of “Fall”, “Spring”, or “Summer”

- precip: measure of precipitation (in inches)

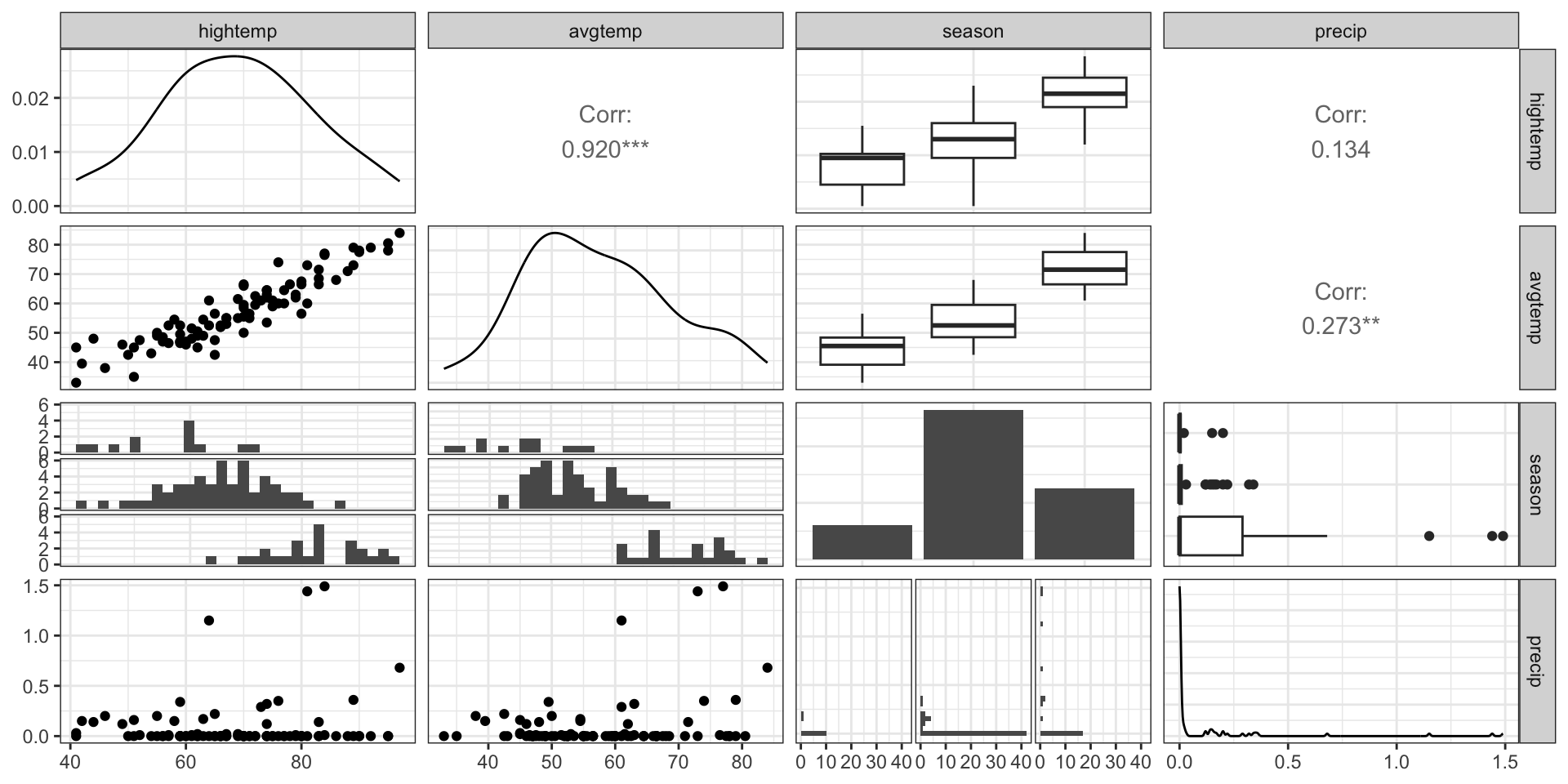

EDA: Relationship between predictors

We can create a pairwise plot matrix using the ggpairs function from the GGally R package

rail_trail |>

select(hightemp, avgtemp, season, precip) |>

ggpairs()

EDA: Relationship between predictors

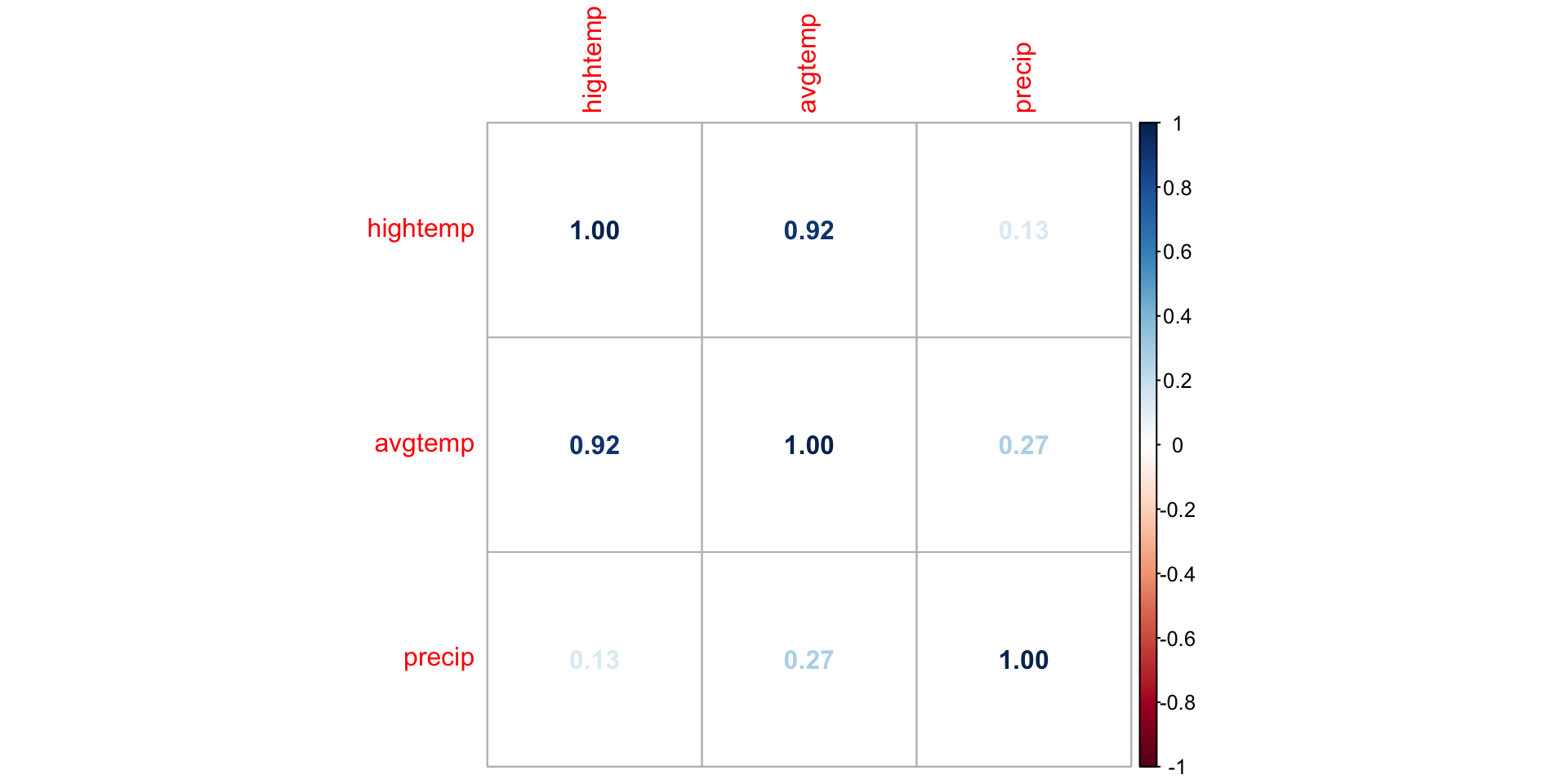

EDA: Correlation matrix

We can use corrplot() from the corrplot R package to make a matrix of pairwise correlations between quantitative predictors

correlations <- rail_trail |>

select(hightemp, avgtemp, precip) |>

cor()

corrplot(correlations, method = "number")

EDA: Correlation matrix

What might be a potential concern with a model that uses high temperature, average temperature, season, and precipitation to predict volume?

Multicollinearity


Ideally the predictors are completely independent of one another

In practice, there is typically some relationship between predictors but it is often not a major issue in the model

If the predictors are perfectly correlated, we cannot find unique values of \(\hat{\beta}_0, \hat{\beta}_1, \ldots, \hat{\beta}_p\) that best fit the model

If predictors are strongly correlated, we can find \(\hat{\beta}_0, \hat{\beta}_1, \ldots, \hat{\beta}_p\), but there may be other issues with the model

Multicollinearity: predictors are strongly correlated with each other

Source: Montgomery, Peck, and Vining (2021)

Sources of multicollinearity

Data collection method - only sample from a subspace of the region of predictors

Constraints in the population - e.g., predictors family income and size of house

Choice of model - e.g., adding high order or interaction terms to the model

Overdefined model - have more predictors than observations
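The last two sources can be illustrated with a minimal base R sketch. The data here are simulated, and the names `small_data` and `overdefined_fit` are made up for illustration; they are not part of the rail trail analysis.

```r
# Choice of model: a predictor and its square are strongly correlated
x <- 1:20
cor_x_x2 <- cor(x, x^2)
cor_x_x2

# Overdefined model: 5 observations but 6 predictors (plus an intercept)
set.seed(1)
small_data <- as.data.frame(matrix(rnorm(5 * 7), nrow = 5))
names(small_data) <- c("y", paste0("x", 1:6))

overdefined_fit <- lm(y ~ ., data = small_data)

# lm() cannot estimate every coefficient; the ones it drops are reported as NA
coef(overdefined_fit)
```

With only 5 observations, at most 5 coefficients can be estimated, so the remaining ones come back as `NA`.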

Example: Issue with multicollinearity

Let’s assume the true population regression equation is \(y = 3 + 4x\)

. . .

Suppose we try estimating that equation using a model with variables \(x\) and \(z = x/10\)

\[ \begin{aligned}\hat{y}&= \hat{\beta}_0 + \hat{\beta}_1x + \hat{\beta}_2z\\ &= \hat{\beta}_0 + \hat{\beta}_1x + \hat{\beta}_2\frac{x}{10}\\ &= \hat{\beta}_0 + \bigg(\hat{\beta}_1 + \frac{\hat{\beta}_2}{10}\bigg)x \end{aligned} \]

Example: Issue with multicollinearity

\[\hat{y} = \hat{\beta}_0 + \bigg(\hat{\beta}_1 + \frac{\hat{\beta}_2}{10}\bigg)x\]

We can set \(\hat{\beta}_1\) and \(\hat{\beta}_2\) to any two numbers such that \(\hat{\beta}_1 + \frac{\hat{\beta}_2}{10} = 4\)

Therefore, we are unable to choose the “best” combination of \(\hat{\beta}_1\) and \(\hat{\beta}_2\)
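The example above can be checked numerically. The sketch below simulates data from \(y = 3 + 4x\) with a small amount of noise (the noise level, sample size, and seed are arbitrary assumptions) and compares two coefficient pairs that both satisfy \(\hat{\beta}_1 + \frac{\hat{\beta}_2}{10} = 4\).

```r
# Simulated data from the true relationship y = 3 + 4x, plus a little noise
set.seed(210)
x <- runif(50, min = 0, max = 10)
z <- x / 10                          # z is an exact linear function of x
y <- 3 + 4 * x + rnorm(50, sd = 1)

# Two very different coefficient pairs, both with beta1 + beta2 / 10 = 4
fitted_a <- 3 + 4 * x + 0 * z        # beta1 = 4,  beta2 = 0
fitted_b <- 3 + (-6) * x + 100 * z   # beta1 = -6, beta2 = 100

# Both pairs produce the same fitted values, so the data cannot tell them apart
all.equal(fitted_a, fitted_b)

# lm() detects the redundancy and reports NA for the aliased coefficient
collinear_fit <- lm(y ~ x + z)
coef(collinear_fit)
```

In this sketch `lm()` keeps `x` and drops `z`, which amounts to fixing \(\hat{\beta}_2 = 0\); it is one convention for picking among the infinitely many equally good solutions, not evidence that `z` is unimportant.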

Variance inflation factor

- The variance inflation factor (VIF) is a measure of the collinearity between predictor \(x_j\) and all other predictors in the model

\[ VIF_{j} = \frac{1}{1 - R^2_j} \]

where \(R^2_j\) is the proportion of variation in \(x_j\) that is explained by all the other predictors
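To make the formula concrete, here is a sketch that computes VIF by hand using only base R. The data are simulated and the variable names (`x1`, `x2`, `x3`, `sim_data`) are hypothetical; `x2` is built to be strongly related to `x1`, while `x3` is unrelated to both.

```r
set.seed(2025)
n  <- 200
x1 <- rnorm(n)
x2 <- 0.9 * x1 + rnorm(n, sd = 0.4)   # strongly related to x1
x3 <- rnorm(n)                        # unrelated to x1 and x2
y  <- 1 + 2 * x1 - x2 + 0.5 * x3 + rnorm(n)
sim_data <- data.frame(y, x1, x2, x3)

# Step 1: regress x1 on all the other predictors (the response y is not used)
aux_fit <- lm(x1 ~ x2 + x3, data = sim_data)

# Step 2: R^2 from that regression is R^2_j for x1
r2_x1 <- summary(aux_fit)$r.squared

# Step 3: apply the formula VIF_j = 1 / (1 - R^2_j)
vif_x1 <- 1 / (1 - r2_x1)
vif_x1

# Check: the VIFs also equal the diagonal of the inverse of the
# predictors' correlation matrix
vif_all <- diag(solve(cor(sim_data[, c("x1", "x2", "x3")])))
vif_all
```

Note that the VIF depends only on the predictors. The response never enters the calculation.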

Detecting multicollinearity

Common practice uses threshold \(VIF > 10\) as indication of concerning multicollinearity (some say \(VIF > 5\) is worth investigation)

Variables with similar values of VIF are typically the ones correlated with each other

Use the vif() function in the rms R package to calculate VIF

library(rms)

trail_fit <- lm(volume ~ hightemp + avgtemp + precip, data = rail_trail)

vif(trail_fit)

hightemp avgtemp precip

7.161882 7.597154 1.193431

Application exercise

How multicollinearity impacts model

When we have perfect collinearities, we are unable to get estimates for the coefficients

When we have almost perfect collinearities (i.e. highly correlated predictor variables), the standard errors for our regression coefficients inflate

In other words, we lose precision in our estimates of the regression coefficients

This impedes our ability to use the model for inference

It is also difficult to interpret the model coefficients
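The loss of precision can be seen in a small simulation. This is a sketch on made-up data, not the rail trail data: the same predictor `x1` is paired once with an unrelated second predictor and once with a near copy of itself, and we compare the standard error of its coefficient.

```r
set.seed(42)
n  <- 100
x1 <- rnorm(n)
x2_indep <- rnorm(n)                   # unrelated to x1
x2_corr  <- x1 + rnorm(n, sd = 0.1)    # almost a copy of x1

# Same true coefficients and noise level in both settings
y_indep <- 1 + 2 * x1 + 2 * x2_indep + rnorm(n)
y_corr  <- 1 + 2 * x1 + 2 * x2_corr  + rnorm(n)

fit_indep <- lm(y_indep ~ x1 + x2_indep)
fit_corr  <- lm(y_corr  ~ x1 + x2_corr)

# Standard error of the x1 coefficient in each model
se_indep <- summary(fit_indep)$coefficients["x1", "Std. Error"]
se_corr  <- summary(fit_corr)$coefficients["x1", "Std. Error"]

c(independent = se_indep, correlated = se_corr)
```

The standard error for `x1` is several times larger in the correlated setting, even though the sample size, true coefficients, and noise level are the same.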

Dealing with multicollinearity

Collect more data (often not feasible given practical constraints)

Redefine the correlated predictors to keep the information from predictors but eliminate collinearity

- e.g., if \(x_1, x_2, x_3\) are correlated, use a new variable \((x_1 + x_2) / x_3\) in the model

For categorical predictors, avoid using levels with very few observations as the baseline

Remove one of the correlated variables

- Be careful about substantially reducing predictive power of the model
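As a rough illustration of removing one of the correlated variables, the sketch below uses simulated data loosely patterned on the temperature variables. The names `high`, `avg`, and `users` and all numeric settings are assumptions for illustration, not values from the rail trail data.

```r
set.seed(7)
n     <- 150
high  <- rnorm(n, mean = 70, sd = 12)
avg   <- high - 10 + rnorm(n, sd = 3)         # strongly related to high
users <- 100 + 4 * high + rnorm(n, sd = 40)

full_fit    <- lm(users ~ high + avg)
reduced_fit <- lm(users ~ high)               # drop one correlated predictor

# Precision of the coefficient for high in each model
se_full    <- summary(full_fit)$coefficients["high", "Std. Error"]
se_reduced <- summary(reduced_fit)$coefficients["high", "Std. Error"]
c(full = se_full, reduced = se_reduced)

# Check that predictive power has not dropped substantially
adj_r2_full    <- summary(full_fit)$adj.r.squared
adj_r2_reduced <- summary(reduced_fit)$adj.r.squared
c(full = adj_r2_full, reduced = adj_r2_reduced)
```

Here dropping `avg` shrinks the standard error for `high` while adjusted \(R^2\) barely changes. With real data the trade-off may be less clear, so both quantities are worth checking before removing a predictor.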

Application exercise

Recap

Introduced multicollinearity

Definition

How it impacts the model

How to detect it

What to do about it